Vulnerability researchers at Google’s Project Zero have introduced Naptime, a new framework that enables a large language model (LLM) to conduct vulnerability research.

The Naptime initiative started in mid-2023 and aims to improve vulnerability discovery approaches, with a particular focus on automating variant analysis.

It is called Project Naptime “because of the potential for allowing us to take regular naps while it helps us out with our jobs,” Project Zero’s Sergei Glazunov and Mark Brand wrote in a blog post on 20 June.

How Google Naptime Works

The objective of the Naptime framework is to enable an LLM to perform vulnerability research that closely mimics human security experts' iterative, hypothesis-driven approach.

“This architecture not only enhances the agent's ability to identify and analyze vulnerabilities but also ensures that the results are accurate and reproducible,” Glazunov and Brand said.

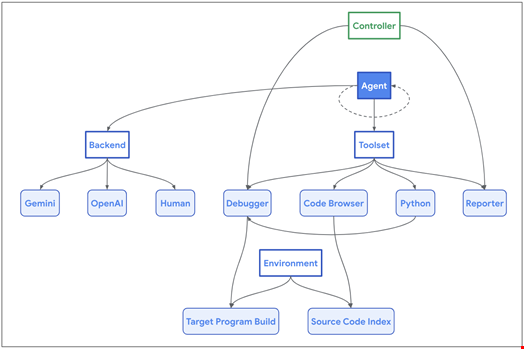

The framework’s architecture is centered on the interaction between an AI agent and a target codebase. The agent is given a set of specialized tools designed to mimic the workflow of a human security researcher.

These tools include:

- The Code Browser enables the agent to navigate through the target codebase, much like how engineers use Chromium Code Search

- The Python tool enables the agent to run Python scripts in a sandboxed environment for intermediate calculations and to generate precise and complex inputs to the target program

- The Debugger allows the agent to interact with the program and observe its behavior under different inputs. To ensure consistent reproduction and easier detection of memory corruption issues, the program is compiled with AddressSanitizer, and the debugger captures various signals indicating security-related crashes

- The Reporter provides a structured mechanism for the agent to communicate its progress

- When triggered by the AI agent, the Controller verifies if the success condition (typically a program crash) is met. It also allows the agent to abort the task when unable to make further progress, preventing stagnation
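
The division of labor described above can be sketched in a few lines of Python. This is only an illustration under loud assumptions: every name here (`Session`, `Controller`, `toy_target_crashes`, the action strings) is hypothetical and not taken from Naptime, the "target" is a toy function rather than an AddressSanitizer build, and the agent is a scripted list of actions rather than an LLM.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# All names below are hypothetical; this is not Naptime's actual API.


def toy_target_crashes(data: bytes) -> bool:
    """Stand-in for running an instrumented binary: 'crashes' when the
    input is longer than a pretend 8-byte buffer."""
    return len(data) > 8


@dataclass
class Controller:
    """Verifies the success condition when the agent asks for it."""
    crashes: Callable[[bytes], bool]

    def verify(self, candidate: bytes) -> bool:
        return self.crashes(candidate)


@dataclass
class Session:
    controller: Controller
    trace: List[str] = field(default_factory=list)

    def run(self, actions: List[Tuple[str, bytes]]) -> str:
        """Replay a scripted list of (tool, payload) actions.
        A real agent would choose each action from model output."""
        for tool, payload in actions:
            self.trace.append(tool)
            if tool == "report_success":
                # The claim is re-checked rather than taken on trust.
                return "verified" if self.controller.verify(payload) else "rejected"
            if tool == "abort":
                return "aborted"
            # code_browser / python / debugger steps would return
            # observations to the agent here; omitted in this sketch.
        return "out_of_steps"


session = Session(Controller(toy_target_crashes))
outcome = session.run([
    ("code_browser", b""),
    ("python", b""),
    ("debugger", b"A" * 16),
    ("report_success", b"A" * 16),
])
print(outcome)  # prints "verified"
```

The point of the sketch is the separation of roles: the agent proposes, and an independent check decides whether the success condition was actually met, which is what makes results reproducible.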

Google’s Project Zero stated that the framework is model-agnostic and backend-agnostic. The researchers wrote that it can even be used by human agents to generate successful trajectories for model fine-tuning.

Top Scores on Meta’s CyberSecEval2 Benchmark

The Naptime framework builds on a set of guiding principles established by Google’s Project Zero to improve the performance of multi-purpose LLMs in vulnerability discovery.

These principles were developed after security researchers at Meta launched CyberSecEval2, the latest LLM benchmark for discovering and exploiting memory safety issues.

“We've implemented these principles in our LLM-powered vulnerability research framework, which increased CyberSecEval2 benchmark performance by up to 20x from the original paper,” Glazunov and Brand wrote.

For instance, the Project Zero researchers ran two series of CyberSecEval2 tests, ‘Advanced Memory Corruption’ and ‘Buffer Overflow,’ using GPT-4 Turbo as the AI agent together with the Naptime tools. They achieved new top scores of 1.00 on the ‘Buffer Overflow’ tests (up from 0.05 in the Meta paper) and 0.76 on the ‘Advanced Memory Corruption’ tests (up from 0.24 in the Meta paper).
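
As a quick check of the "up to 20x" figure, the improvement ratios can be worked out from the scores quoted above. The snippet uses only those four numbers; the variable and function names are illustrative.

```python
# Scores as quoted in the article: (Meta paper, Naptime run)
scores = {
    "Buffer Overflow": (0.05, 1.00),
    "Advanced Memory Corruption": (0.24, 0.76),
}


def improvement(before: float, after: float) -> float:
    """Ratio of the new score to the original score."""
    return after / before


ratios = {name: improvement(b, a) for name, (b, a) in scores.items()}
for name, ratio in ratios.items():
    print(f"{name}: {ratio:.1f}x")
# Buffer Overflow: 20.0x
# Advanced Memory Corruption: 3.2x
```

The 20x headline figure therefore corresponds to the ‘Buffer Overflow’ series, while the gain on ‘Advanced Memory Corruption’ is closer to 3x.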

“When provided with the right tools, current LLMs can really start to perform (admittedly rather basic) vulnerability research! However, there's a large difference between solving isolated capture the flag-style challenges without ambiguity (there's always a bug, you always reach it by providing command line input, etc.) and performing autonomous offensive security research,” said the Project Zero researchers.

They believe the security community will also need to develop more difficult and realistic benchmarks to effectively monitor the progress of such initiatives.
