Advances in generative AI have changed the economics of impersonation. Attackers no longer need to steal credentials or compromise infrastructure; they can simply pretend to be the user. Synthetic video, cloned voices, and high-quality biometric spoofs have made identity-based attacks faster, cheaper, and far more convincing.

As the World Economic Forum has noted, tools for copying a voice, image, or video are now widely accessible and require minimal technical skill, and attacks are rising sharply.

Biometric authentication is often pitched as the fix, but it only checks whether the biometric matches, not whether there's a real person behind it. Without liveness detection, a convincing spoof is indistinguishable from the real thing.

Liveness detection closes that gap by verifying that the biometric comes from a live human interacting in real time, not a recording, render, or replay. To understand its value, it helps to look at the specific attack techniques it is designed to stop.

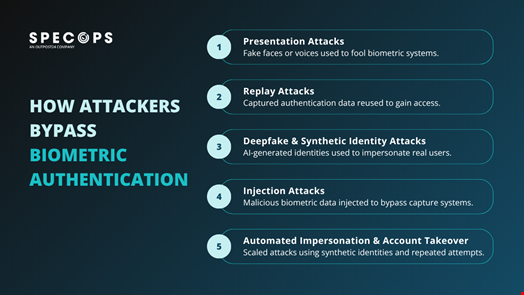

Presentation Attacks

Presentation attacks are the simplest way to bypass biometric systems, using fake versions of a user’s face or voice to fool sensors.

Techniques range from printed photos and screen images to high-resolution video playback and realistic physical masks. As biometric systems become more widely deployed, the quality of these spoofing techniques continues to improve.

Without liveness detection, systems that rely purely on matching visual or audio patterns can accept these inputs as legitimate. The attack succeeds because the system cannot distinguish between a real person and a convincing replica. Liveness detection adds checks focused on physical presence: it analyzes depth, movement, and natural variation, or requires real-time interaction, which makes static or pre-recorded inputs much harder to pass off as genuine.
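To make the "movement and natural variation" idea concrete, here is a deliberately simplified Python sketch. It assumes frames arrive as flat lists of grayscale pixel values, and the function names and the `min_motion` threshold are hypothetical. Production liveness systems rely on depth sensing, texture analysis, and trained models rather than a check this basic, and a video replay would pass it; it only shows why a perfectly static input such as a printed photo stands out.

```python
from statistics import mean


def frame_motion_scores(frames):
    """Mean absolute pixel change between each pair of consecutive frames.

    frames: list of equal-length sequences of grayscale values (0-255).
    """
    return [
        mean(abs(a - b) for a, b in zip(prev, cur))
        for prev, cur in zip(frames, frames[1:])
    ]


def looks_static(frames, min_motion=0.5):
    """Flag input whose frames barely change (threshold is an assumed value)."""
    scores = frame_motion_scores(frames)
    # With fewer than two frames there is no motion evidence at all.
    return not scores or max(scores) < min_motion


photo = [[10, 20, 30]] * 5                        # identical frames
live = [[10, 20, 30], [12, 25, 28], [9, 21, 33]]  # small natural variation
print(looks_static(photo), looks_static(live))
```

A real deployment would treat a signal like this as one weak input among many, not as a decision on its own.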

Replay Attacks

Replay attacks take a different approach. Instead of creating a fake biometric, attackers capture a legitimate authentication attempt and reuse it, either by recording a login session or intercepting biometric data in transit. Because the data itself is genuine, traditional systems will accept it without question.

Without verifying when and how the biometric was captured, the system cannot tell whether it is being reused. Liveness detection addresses this by enforcing real-time validation through time-sensitive prompts, dynamic challenges, and continuous analysis, ensuring that previously captured data cannot simply be replayed.
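One common way to enforce that kind of freshness is a short-lived, single-use challenge that must accompany each capture. The sketch below is a minimal illustration under assumptions: `ChallengeStore`, the 10-second window, and the in-memory dictionary are all hypothetical, and a real service would persist challenges and bind them to a session and to the captured media.

```python
import secrets
import time

CHALLENGE_TTL_SECONDS = 10.0  # assumed window; tune per deployment


class ChallengeStore:
    """Issues single-use, short-lived challenges (hypothetical helper)."""

    def __init__(self, ttl=CHALLENGE_TTL_SECONDS, clock=time.monotonic):
        self._ttl = ttl
        self._clock = clock
        self._pending = {}  # nonce -> time it was issued

    def issue(self):
        """Create an unpredictable nonce and remember when it was issued."""
        nonce = secrets.token_urlsafe(16)
        self._pending[nonce] = self._clock()
        return nonce

    def redeem(self, nonce):
        """Accept a nonce only once, and only within the time window."""
        issued_at = self._pending.pop(nonce, None)  # pop makes it single-use
        if issued_at is None:
            return False  # unknown or already used: possible replay
        return (self._clock() - issued_at) <= self._ttl
```

Because each nonce is removed on first use, a recording of an earlier session carries a challenge the server no longer accepts, even if the biometric data in it is genuine.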

Deepfake and Synthetic Identity Attacks

The most significant shift in recent years has been the rise of AI-generated impersonation. Attackers can now generate realistic facial movements and voice patterns that closely mimic a target individual. In some cases, this can be done in real time, allowing attackers to interact with systems or service desk agents as legitimate users.

The Arup case from early 2024 illustrates how convincing this can be. A finance employee at the engineering firm's Hong Kong office joined a video call with what appeared to be the CFO, but it was an AI-generated deepfake. The employee was persuaded to transfer around $25 million before discovering the fraud. No systems were breached and no credentials were stolen. The attackers simply impersonated people the employee trusted.

These attacks are particularly effective in remote environments, where there is no physical verification and trust is placed in digital signals. A convincing deepfake can pass visual inspection and basic biometric checks if those checks focus only on matching features.

Liveness detection provides a layer of defense by analyzing behavioral and environmental signals that are difficult to reproduce consistently. Subtle inconsistencies in timing, lighting, or movement can indicate synthetic input. Challenge-response mechanisms also require immediate and unpredictable reactions that are difficult to generate convincingly on demand.
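As a rough illustration of a challenge-response check, the Python sketch below generates an unpredictable sequence of prompts and verifies that the observed reactions match, in order and within a time limit. The action names, the three-second limit, and the `(action, seconds_taken)` input format are assumptions; detecting the actions from video is the hard part and is left to a hypothetical upstream detector.

```python
import secrets

# Illustrative prompt set; a real product defines its own.
ACTIONS = ("turn_left", "turn_right", "blink", "smile", "nod")


def new_challenge(length=3):
    """Pick an unpredictable sequence of prompts using a CSPRNG."""
    rng = secrets.SystemRandom()
    return [rng.choice(ACTIONS) for _ in range(length)]


def response_matches(challenge, observed, max_seconds_per_action=3.0):
    """Check order and timing of reactions.

    observed: list of (action, seconds_taken) tuples reported by a
    hypothetical detector, one per prompt.
    """
    if len(observed) != len(challenge):
        return False
    return all(
        action == expected and 0 < seconds <= max_seconds_per_action
        for expected, (action, seconds) in zip(challenge, observed)
    )
```

The timing bound matters as much as the order: pre-generated media cannot know the sequence in advance, and generating a matching reaction on demand has to happen inside the allowed window.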

While AI capabilities continue to evolve, liveness detection forces attackers to move beyond appearance and replicate real-time human behavior, which increases the complexity and cost of the attack.

Injection Attacks

In some cases, attackers bypass the biometric capture process entirely by injecting data directly into the system. This can involve feeding pre-recorded video into an API or manipulating the device to submit stored biometric data instead of capturing live input.

The GoldPickaxe trojan is a good example of this type of attack. The malware targeted iOS and Android users in Thailand and Vietnam, prompting victims to complete facial recognition scans through apps disguised as government services. The captured biometric data was then used with AI face-swapping tools to generate deepfakes, allowing attackers to authenticate into victims' bank accounts. Uncovered by Group-IB in early 2024, it was the first documented case of malware purpose-built to harvest facial biometrics for this kind of fraud.

These attacks exploit weaknesses in how biometric systems are implemented, not in the biometric itself. If the system trusts all incoming data as legitimate, it will accept malicious input without further checks. Liveness detection helps catch injected input by validating how the data was captured, not just what it contains.
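One way to think about "validating how the data was captured" is to have a trusted capture component attach an authentication tag that binds the media to a server-issued nonce, so the server can reject frames that did not come through that path. The sketch below uses an HMAC from Python's standard library; the function names, the shared `device_key`, and the message layout are assumptions for illustration, not a description of any specific product. A shared secret on a compromised device offers limited protection, so real deployments typically lean on hardware-backed keys and platform attestation.

```python
import hashlib
import hmac


def sign_capture(device_key: bytes, nonce: str, frame_bytes: bytes) -> str:
    """Tag a trusted capture component might compute (hypothetical scheme)."""
    digest = hashlib.sha256(frame_bytes).digest()
    message = nonce.encode("utf-8") + digest  # bind the frame to the nonce
    return hmac.new(device_key, message, hashlib.sha256).hexdigest()


def verify_capture(device_key: bytes, nonce: str, frame_bytes: bytes, tag: str) -> bool:
    """Server-side check that the frame and nonce match the supplied tag."""
    expected = sign_capture(device_key, nonce, frame_bytes)
    # Constant-time comparison avoids leaking how much of the tag matched.
    return hmac.compare_digest(expected, tag)
```

Under this scheme, media pushed straight into the API without a valid tag for the current nonce fails verification, regardless of how realistic the content looks.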

Automated Impersonation and Account Takeover

Attackers can also run large-scale campaigns using a combination of stolen data, synthetic identities, and scripted interactions. In biometric workflows that lack liveness detection, these attacks can be repeated at scale using pre-generated inputs or recycled media.

Liveness detection introduces variability and interaction that disrupt this model. Each authentication attempt requires a live response, which makes the process harder to automate. While this does not eliminate the threat, it reduces the efficiency and scalability these campaigns depend on.

Applying Liveness Detection to High-Risk Identity Workflows

Liveness detection is most valuable in workflows where identity is being actively challenged. Account recovery, service desk verification, and onboarding are all points where attackers impersonate legitimate users rather than exploit technical vulnerabilities. In these scenarios, the key question is not whether the correct credentials are presented, but whether the person requesting access is legitimate.

Solutions such as Specops Verified ID apply liveness detection within these workflows, combining real-time biometric verification with identity validation to confirm user presence during critical actions like password resets and service desk interactions. This reduces reliance on knowledge-based checks and manual judgement, both increasingly vulnerable to social engineering and AI-driven impersonation.

Book a demo to see how Specops Verified ID fits into your identity workflows.