Google is betting big on agentic AI and wants professionals to track their AI agents on its new hub, the Gemini Enterprise Agent Platform.

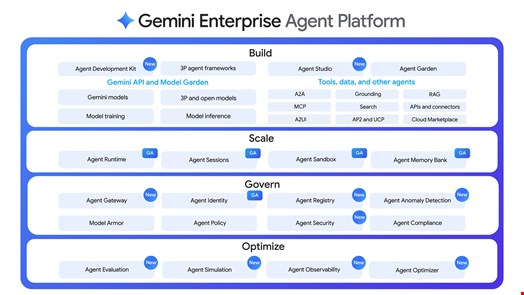

Introduced a few months after the launch of Gemini Enterprise, the Agent Platform is Google’s new hub to manage agentic AI workflows for both Google-made and external AI agents.

The platform aims to bring together a series of existing and new capabilities. Among them, the Agent Platform enables users to assign every agent a unique cryptographic ID that is referenced in every action the agent takes.

These agent IDs are designed to be mapped back to “defined authorization policies that are traceable and auditable,” said Thomas Kurian, Google Cloud’s CEO, speaking at the Google Cloud Next 26 conference, held in Las Vegas from April 22 to April 24.

“We’re bringing zero trust verification to every agent and at every orchestration step,” he added.
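As a rough illustration of the idea, and not Google's implementation, the sketch below binds each agent action to an agent ID and checks it against a policy table. Every name in it (`new_agent`, `sign_action`, `verify_action`, the policy dictionary) is hypothetical, and HMAC from Python's standard library stands in for whatever cryptographic scheme the platform actually uses.

```python
import hashlib
import hmac
import json
import secrets


def new_agent():
    """Create a random agent ID and a secret key (both hypothetical)."""
    return secrets.token_hex(8), secrets.token_bytes(32)


def sign_action(agent_id, key, action):
    """Return an audit record that binds the action to the agent ID."""
    payload = json.dumps({"agent_id": agent_id, "action": action}, sort_keys=True)
    tag = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}


def verify_action(record, key, policies):
    """Check the signature first, then the agent's authorization policy."""
    expected = hmac.new(key, record["payload"].encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, record["tag"]):
        return False  # record was tampered with or signed by another key
    body = json.loads(record["payload"])
    # Default-deny: unknown agents have an empty set of permitted actions.
    return body["action"] in policies.get(body["agent_id"], set())


agent_id, key = new_agent()
policies = {agent_id: {"read_calendar"}}  # assumed policy table
record = sign_action(agent_id, key, "read_calendar")
print(verify_action(record, key, policies))
```

Because every record carries both the agent ID and a signature, an auditor can trace an action to one agent and confirm that the policy permitted it.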

Tackling A New Class of Identity Risk

AI agents are poised to disrupt identity management for security professionals.

While traditional non-human identities (NHIs), such as API keys or service accounts, are deterministic, AI agents are autonomous and goal-oriented. They are capable of understanding a high-level goal, breaking it down into steps and independently executing a series of actions across various applications to achieve that goal.

This introduces new types of dynamic digital entities which act on behalf of humans and make independent operational decisions.

Agent identities will be listed on Google Cloud’s Gemini Enterprise Agent Platform in Agent Registry, a central library that indexes every internal agent, tool and skill.

Finally, the Agent Platform will also feature Agent Gateway, a single dashboard to manage a fleet of AI agents. This includes enforcing policies for all agent-to-agent and agent-to-tool connections and interactions. The gateway supports several agentic AI protocols, such as Model Context Protocol (MCP) and Agent2Agent (A2A).

“It provides secure, unified connectivity between agents and tools across any environment, while enforcing consistent security policies and Model Armor protections to safeguard against prompt injection and data leakage,” said a Google Cloud statement published on April 22.

Model Armor is Google Cloud’s own guardrail layer against adversarial attacks, including prompt injection, sensitive data leaks and harmful content.
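To make the gateway's role concrete, here is a minimal sketch of default-deny policy enforcement for agent-to-agent and agent-to-tool connections. It is an assumption-laden illustration, not the Agent Gateway API: the `Connection` type, the `ALLOWED` table and the agent and tool names are all invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Connection:
    source: str    # the calling agent
    target: str    # the agent or tool being called
    protocol: str  # for example "MCP" or "A2A"


# Hypothetical allowlist of permitted connections.
ALLOWED = {
    ("billing-agent", "invoice-tool", "MCP"),
    ("support-agent", "billing-agent", "A2A"),
}


def is_allowed(conn: Connection) -> bool:
    """Default-deny: only explicitly listed connections pass."""
    return (conn.source, conn.target, conn.protocol) in ALLOWED


print(is_allowed(Connection("billing-agent", "invoice-tool", "MCP")))
print(is_allowed(Connection("support-agent", "invoice-tool", "MCP")))
```

Routing every connection through one check like this is what lets a single control point apply the same rules regardless of which protocol the agents speak.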

Francis deSouza, COO of Google Cloud, said that security teams need to identify agents, both authorized and unauthorized, used across their workforce.

"When you roll out authorized agents, you want to manage their access control, what they should have access to and that may change over time in a way that's more dynamic than human identities," he added.


Agent Security Dashboard and Anomaly Detection Introduced

At Cloud Next 26, Google Cloud also unveiled Agent Anomaly Detection, a new feature that uses statistical models and an LLM-as-a-judge framework, in which a large language model (LLM) acts as the evaluator, to identify unusual behavior in real time, flagging potential threats such as suspicious reasoning patterns.

Anomaly Detection works alongside the existing Agent Threat Detection, which monitors malicious activities such as reverse shells and connections to known bad IP addresses.
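Google has not published how its statistical models work. As a generic illustration of the statistical side only, the sketch below flags an agent whose activity deviates sharply from its own history using a z-score. The function name, the threshold of three standard deviations and the sample data are assumptions, and the LLM-as-a-judge component is not shown.

```python
from statistics import mean, stdev


def is_anomalous(history, current, threshold=3.0):
    """Flag `current` if it lies more than `threshold` standard
    deviations from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return current != mu  # any change from a constant baseline
    return abs(current - mu) / sigma > threshold


# Hypothetical tool calls per minute made by one agent.
calls_per_minute = [10, 12, 11, 9, 10, 11]
print(is_anomalous(calls_per_minute, 11))
print(is_anomalous(calls_per_minute, 40))
```

A check like this catches sudden spikes in volume but says nothing about intent, which is presumably where a judge model that reviews an agent's reasoning would come in.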

Another addition is the Agent Security dashboard, powered by Google Cloud’s Security Command Center (SCC), which unifies threat detection and risk analysis within Google Cloud Platform (GCP) environments.

Google Cloud said this new dashboard will help security teams map relationships between AI agents and models, automate asset discovery and scan for vulnerabilities in operating systems and language packages.

These new capabilities build on Gemini Enterprise’s existing security tools, including Agent Compliance and Agent Policy, which already provide policy enforcement capabilities.

Google Cloud Pushes Deeper into Agentic AI and Cybersecurity

The Gemini Enterprise Agent Platform launch and release of Google’s new agentic AI security capabilities were among a flurry of Google Cloud announcements at Cloud Next 26.

Israeli cloud security firm Wiz, acquired by Google in 2025, has expanded its AI-Application Protection Platform (AI-APP) to embed security directly into developer workflows.

The updates bring real-time vulnerability scanning, AI-generated code security, a dynamic AI bill-of-materials (AIBOM) offering and automated remediation into platforms like AI development solution Lovable, integrated development environments (IDEs) and version control systems.

Google also released three new agents for cybersecurity professionals. The Threat Hunting agent aims to help security teams proactively hunt for novel attack patterns and stealthy adversary behaviors that bypass traditional defenses.

The Detection Engineering agent is designed to identify coverage gaps and create new detections for threat scenarios, reducing toil and transforming detection creation from a manual craft into an automated science. Both the Threat Hunting and Detection Engineering agents are available in preview.

Finally, coming soon to preview, Google’s Third-Party Context agent has been created to enrich security teams’ workflows with contextual data from third-party content.

When available, the three agents will be integrated into Google Security Operations, the firm’s security analytics, threat detection and incident response platform.

Google claimed that its Triage and Investigation agent, introduced in April 2025, processed over five million alerts in the last year, “reducing a typical 30-minute manual analysis to 60 seconds.”

Google has also added a new dark web intelligence feature to Google Threat Intelligence, now available in preview.

The tech firm said that internal tests showed the feature can analyze millions of daily external events with 98% accuracy to elevate threats that truly matter.

Google also launched two AI-focused processing chips, the Tensor Processing Unit 8t (TPU 8t) for AI training and the Tensor Processing Unit 8i (TPU 8i) for AI inference.

Finally, Google committed to investing $750m in a new agentic AI partner fund available for global consulting firms, systems integrators, software partners and channel partners.

The fund’s goal is to support AI value identification, agentic AI prototyping, agent building and deployment and upskilling.


Image credit: gguy / Shutterstock.com