Vibe coding tools like Anthropic's Claude Code are flooding software with new vulnerabilities, Georgia Tech researchers have warned.

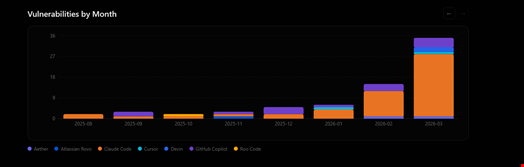

At least 35 new Common Vulnerabilities and Exposures (CVE) entries disclosed in March 2026 were the direct result of AI-generated code, up from six in January and 15 in February.

The vulnerabilities are being tracked as part of the ‘Vibe Security Radar’ project, which was started in May 2025 by the Systems Software & Security Lab (SSLab), part of Georgia Tech’s School of Cybersecurity and Privacy.

How Georgia Tech Tracks Flaws Introduced by AI Coding Tools

The Vibe Security Radar aims to track vulnerabilities that were directly introduced by AI coding tools and made it into public advisories, such as CVE.org, the US National Vulnerability Database (NVD), the GitHub Advisory Database (GHSA), Open Source Vulnerabilities (OSV), RustSec and others.

Speaking to Infosecurity, Hanqing Zhao, founder of the Vibe Security Radar, said: “Everyone is saying AI code is insecure, but nobody is actually tracking it. We want real numbers. Not benchmarks, not hypotheticals, real vulnerabilities affecting real users.”

He emphasized that this tracking work is essential now that more people have started vibe coding entire projects “straight to production.”

“Realistically, even teams that do code review aren't going to catch everything when half the codebase is machine-generated,” he added.

50 Vibe Coding Tools Covered, 74 Vulnerabilities Tracked

Zhao claimed that his team tracks approximately 50 AI-assisted coding tools, including Claude Code, GitHub Copilot, Cursor, Devin, Windsurf, Aider, Amazon Q and Google Jules.

To develop the Vibe Security Radar dashboard, researchers first pull data from public vulnerability databases, find the commit that fixed each vulnerability, then trace backwards to find who introduced the bug in the first place.

“If that commit has an AI tool's signature on it, like a co-author tag or a bot email, we flag it,” Zhao told Infosecurity.

Finally, the team uses AI agents to “understand the root cause of each vulnerability and determine whether AI-generated code contributed to it.”

“The agents have access to the actual Git repository and commit history, so they can do a real investigation, not just pattern matching,” he said.
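The metadata check Zhao describes, looking for a co-author tag or a bot email on the commit that introduced a bug, can be illustrated with a minimal Python sketch. The signature patterns and the function name `find_ai_signals` are hypothetical and chosen for illustration only; the article does not disclose the project's actual signature list or code.

```python
import re

# Hypothetical signature patterns, for illustration only.
# The project's real list of tool signatures is not given in the article.
AI_SIGNATURES = {
    "Claude Code": re.compile(r"noreply@anthropic\.com", re.IGNORECASE),
    "example-ai-bot": re.compile(r"example-ai-bot@example\.com", re.IGNORECASE),
}

# Matches Git "Co-authored-by:" trailer lines in a commit message.
TRAILER_RE = re.compile(r"^co-authored-by:\s*(.+)$", re.IGNORECASE | re.MULTILINE)


def find_ai_signals(author_email, commit_message):
    """Return the names of tools whose signature appears on the commit."""
    # Check the author email and every co-author trailer value.
    candidates = [author_email] + TRAILER_RE.findall(commit_message)
    hits = []
    for tool, pattern in AI_SIGNATURES.items():
        if any(pattern.search(text) for text in candidates):
            hits.append(tool)
    return hits


message = "Fix parser\n\nCo-Authored-By: Claude <noreply@anthropic.com>\n"
flagged = find_ai_signals("dev@example.com", message)
unflagged = find_ai_signals("dev@example.com", "Fix parser\n")
```

A check of this kind only finds commits that still carry such metadata, which is the limitation Zhao points to: inline suggestions and stripped trailers leave nothing for it to match.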

Of the 74 confirmed CVEs attributed directly to the use of AI coding tools, Claude Code showed up the most, but Zhao noted that this is mostly because the Anthropic tool “always leaves a signature.”

“Tools like Copilot's inline suggestions leave no trace at all, so they're harder to catch,” he added.

The predominance of Claude Code-introduced flaws could also reflect the tool's widespread use in the software development community.


Open-Source Projects Hide Most AI-Linked Flaws

However, Zhao admitted that the real number of CVEs due to the use of AI coding tools “is almost certainly higher” than the one shown on the Vibe Security Radar dashboard.

“These are just the cases that leave metadata traces. Based on what we see in projects like that, we estimate five to 10 times what we currently detect, roughly 400 to 700 cases across the open-source ecosystem,” he said.

“Take OpenClaw for example. It has over 300 security advisories, and we know the project relies heavily on vibe coding. But most of the AI tool traces have been stripped by the authors, so we can only confirm around 20 cases with clear AI signals.”

Additionally, many vulnerabilities never receive a public identifier (e.g. a CVE or GHSA number) and therefore cannot be tracked as easily.

Furthermore, Zhao is convinced that the number of vulnerabilities induced by AI coding tools is “only going to grow.”

“Last month, Claude Code alone accounted for over 4% of public commits on GitHub and that number is still climbing. More AI code means more AI-introduced vulnerabilities,” he said.

The Vibe Security Radar is a long-term project that he and his team will keep improving.

“Right now, we rely on metadata like co-author tags and bot emails, but people strip those. The next step is looking at the bigger picture: the project as a whole, commit patterns and the overall coding style. AI-written code has a recognizable feel to it. We're working on models that can pick up on those signals without needing any explicit metadata,” he concluded.

Image credit: aileenchik / Shutterstock.com
